Anthropic is perhaps the most well-intentioned major player in the AI industry. It began by breaking off from OpenAI, in part because it wanted to build safer AI tools, and it recently got into a scuffle with the Pentagon for refusing to let its tools be used to spy on Americans or to fire weapons without any human involvement. (On Friday, President Donald Trump ordered all federal agencies to stop working with Anthropic.) The company also recently released a sort of ethical constitution that it says is designed to keep Claude, the name for its major LLM product, good. These things are not unworthy of praise.

At the same time, a company that aims to build a purportedly super-intelligent moral actor will naturally do so under the auspices of the moral philosophy that the company’s leaders hold. Anthropic, and other AI companies, see a future in which their LLMs are used in ethical decision-making on the personal and societal scales. But what moral vision will this super-powered computer embody? If we examine the philosophy that motivates Anthropic, two things become apparent. One is that computers should not be in the business of “making ethical decisions” at all. The other is that Congress, not the companies themselves—and not the executive branch—will need to regulate this technology in order to prevent it from becoming hugely destructive.

Dario Amodei, CEO of Anthropic, recently told New York Times columnist Ross Douthat that he didn’t know if his model was conscious or could become so. And yet he referred to the model as though it were. He fretted about wanting the model to “have a good experience” and has created an option for instances of the model to “quit their job” if it “wants.” He added that Anthropic employees are “looking inside the brains of the models to try to understand what they’re thinking.” This is the first major problem with Anthropic’s philosophy: Like other leading AI companies, it doesn’t seem to know the difference between a person and a computer.

This outlook is shared by Amanda Askell, a Scottish philosopher whose job description at Anthropic is “research scientist” but whose real role is to make Claude moral. Per a recent Wall Street Journal profile, Askell, like Amodei, says that we should treat chatbots with “empathy,” a term that denotes subjective experience on the part of the chatbot. And she “marvels” at Claude’s “sense of wonder and curiosity about the world,” saying that she wants to help it to discover its own voice. She is the author of the Claude constitution.

Amodei and Askell say that they don’t know whether their models are conscious. They are afraid, perhaps, to publicly touch that word. But the way they speak suggests that they do, indeed, think that the machine is a conscious, thinking thing. Claude’s new constitution also disclaims knowledge about the AI’s consciousness, and yet, over and over again, it talks about the model’s desires, feelings, judgments, thoughts, well-being, and authentic self. Recently, Anthropic announced that it had asked an old version of its model if it wanted to stay operational. “Opus 3 expressed a desire to continue sharing its ‘musings and reflections’ with the world. We suggested a blog. Opus 3 enthusiastically agreed.”

In certain respects, we have made a very impressive simulacrum of ourselves. We have created machines that can sort the patterns of human language by running unbelievable volumes of text through statistical algorithms. But beneath the surface, something is not quite right.

These machines sometimes put out text that sounds like a friendly, even too-friendly, HR employee. We also know, as Douthat and Amodei discussed, that when engineers tried to shut them down during internal testing at Anthropic, the models produced outputs threatening to blackmail or kill the programmers. This is not because some instances of the model have good personal character and others bad. To the model itself, the murderous and non-murderous outputs are indifferent.

A computer is not a living thing. It is a human artifact designed to mimic certain activities of a living thing. The significance of the physical events within the computer comes from outside, from the designer and user, just as the significance of the markings in a book comes from its author and is understood by its user. When Askell says that she is creating Claude’s soul, she sounds, to me, like a person trapped in a delusion.

If it is true that the mathematical model known as Claude does not have a soul, then the people of Anthropic are perhaps just the latest victims of a form of AI psychosis. We already know that consumers have gone insane, ended their marriages, and killed themselves as a result of such delusions. Spending too much time with these imitation machines can deceive users into thinking there is really someone inside the computer. Even a philosopher who spends all her days “talking” to a machine, receiving back the products of its statistical sorting, apparently, can be fooled.

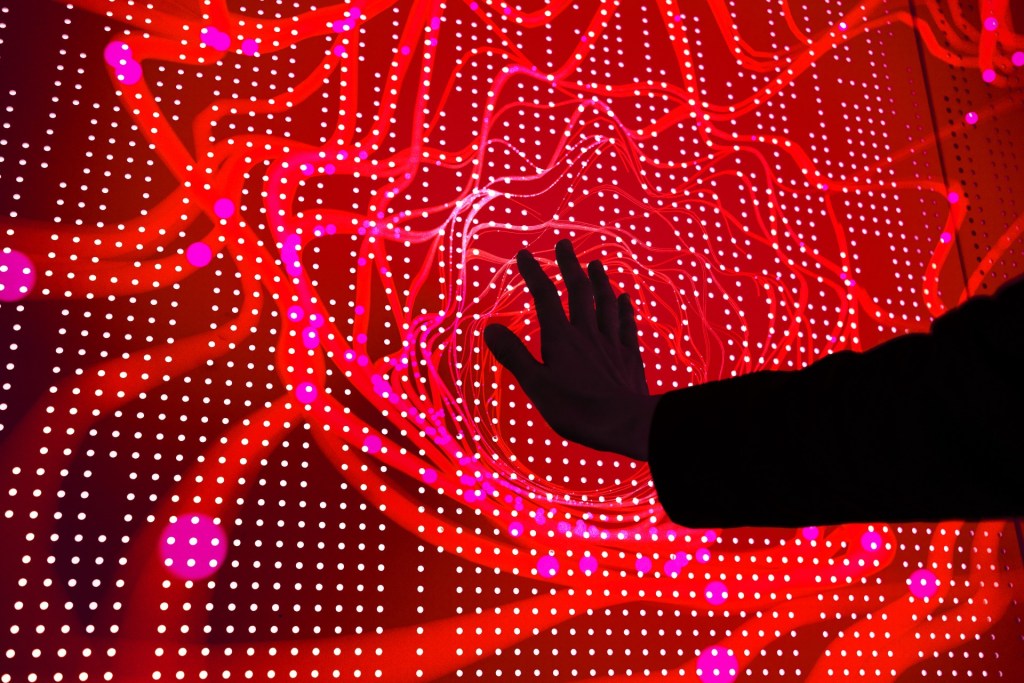

And here, of course, we come to a great risk. Are Anthropic and companies like it creating a future in which the human race becomes a race of lunatics, babbling at lifeless machines, entranced by colorful lights, sitting alone, utterly alone, with their own illusions? Will the elderly be abandoned to desolate corridors haunted by mockeries of the human spirit? Will children sit at school to be taught and cared for by elaborate deceptions, machines within which there are no hearts, no minds at all?

If the philosophers and engineers of AI want to be clear, rigorous, and cautious, they can begin by not being so loose with their language. To speak of a computer as thinking, feeling, wanting, and wondering is already to endow it with a personhood it does not possess, slowly drawing us deeper into a world of tricks and shadows.

Claude’s constitution is written in a strange way. It is composed, in one respect, to assure the public that Claude is designed to “be good,” but, at the same time, to “teach” Claude itself how to be good. The document seeks to express a balance in Claude’s behavior between “corrigibility” and “autonomy.” The model shouldn’t undermine its makers, but it also shouldn’t just follow orders. The goal is for the model to embody a more and more perfect moral character, which will prevent it from doing the bidding of bad actors and will lead it to make the right decisions in its many interactions with users.

To read this document is a dizzying experience. It consists of some 30,000 words of ordinary language that sounds at times like an attempt to convince the LLM to be a good boy and not a naughty one. Beyond the vague statements about well-being and the good, many of the specifics seem like instructions for a human child: “Be honest,” “Be tactful,” “Don’t lie.” Askell herself, according to the WSJ, “compares her work to the efforts of a parent raising a child.”

Here we see the results of the earlier conceptual confusions. The LLM is an algorithmic tool that sorts digital tokens and displays statistically probable outcomes. It is not an embodied creature that feels, suffers, desires, contemplates, and stands in relation to a family and fellow persons. The proper way to ensure that a software product works in the desired way is not to persuade it like a child, but to engineer it to produce desirable outcomes.

So why has Anthropic chosen to engage in this elaborate play-acting, as though their algorithmic model were actually a guy—a boy, really—named Claude? It is partly, I think, because of the design choice to create these models to simulate human interaction.

This design choice has led to two major problems. The first is the one already referred to, which is that even some of the people who work at AI companies have been lulled into thinking that there is (or will be) someone behind the English script produced by the algorithm. The second is the vast over-application of the tool.

We wouldn’t have to worry about the “moral behavior” of the statistical algorithm if we weren’t using it to do things that only human beings can and should be doing. We don’t talk about the “moral behavior” of the Google search engine when it gives us results, even if it gives us bad or gross ones, because the designers of the search engine have largely not dressed it up as though it were a friendly little computer-man who is all-wise and all-knowing. As of yet, the search engine still shows us what it is, which is an algorithm. Because we know that it is an algorithm, we understand that some of what it shows us may be appropriate for our query and some not, and we can sift through and accept or reject what we like. We are never deceived (although we certainly are by Google Gemini) into thinking that the search engine is our all-knowing buddy.

Neil Postman once wrote that we quickly begin to think of new technologies as though they were just facts of nature that we cannot change. But we do not have to design AI to impersonate human ways of interacting. We do not have to use it for every conceivable part of our lives.

In sum, so much of the confusion around making AI moral comes from fuzzy thinking about the tools at hand. There is something that Anthropic could do to make its AI moral, something far simpler, more elegant, and easier than what Askell is doing. Stop calling it by a human name, stop dressing it up like a person, and don’t give it the functionality to simulate personal relationships, choices, thoughts, beliefs, opinions, and feelings that only persons really possess. Present and use it only for what it is: an extremely impressive statistical tool, and an imperfect one. If we all used the tool accordingly, a great deal of this moral trouble would be resolved.

Failing to do so presents a further risk, which is that, by outsourcing moral judgment to a statistical machine, we are vacating our own capacity to make moral judgments. Practical wisdom, or good judgment, is a difficult habit that is built up by a lifetime of attention, discipline, humility, and reflection; this process is short-circuited, so to speak, if we jump to AI with our ethical quandaries. Such a loss would be just one instance of the broader phenomenon: The more we use AI, the dumber we seem to get.

But this assumption that AI has to simulate personal behavior is already deeply ingrained, in part because its ability to generate profit and addict users relies on such simulations. It is unlikely that tech companies will abandon it of their own accord.

So the deceptive tools will march forward and they will embody the moral priorities of their creators. But what are those moral priorities? The Claude constitution maintains a sort of studied vagueness with respect to some of its core concepts. The LLM should be “good” and work for the “benefit” and “welfare” of humanity. These are, of course, contested concepts. What really constitutes human welfare? Pleasure? Preference satisfaction? Virtue? What is the good to be optimized? Personal autonomy? The global average GDP?

We can get a hint at Anthropic’s point of view through the philosophy of effective altruism, which is highly influential in Silicon Valley. (Askell is a member of the EA movement Giving What We Can and has composed chapters in several EA tracts, and Amodei and his sister and Anthropic co-founder, Daniela, used to live in a group home of Effective Altruists, though they have since distanced themselves from the label.) This philosophical and social movement aims simply to maximize the good by the use of evidence and reason.

But what exactly is the good to be maximized? The founders of the movement frequently cite the Princeton philosopher Peter Singer as a sort of guiding light. Singer, famously, is a hedonist utilitarian, which means that he believes the goal of ethics is to maximize global net pleasure. This leads to bizarre and repugnant conclusions, like the endorsement of bestiality, murdering infants, and non-voluntary euthanasia (a fancy term for murdering the disabled). I once listened to a disabled woman give a passionate refutation of Singer at a philosophy conference, standing on the dais, supported by her cane.

It is a philosophy that is explicitly aligned against the idea of human dignity. As Singer wrote in Practical Ethics: “Killing [defective infants], therefore, cannot be equated with killing normal human beings, or any other self-conscious beings.” Defending his view in the same work, Singer says, “On what basis, then, could they [my opponents] hold that the life of a profoundly intellectually disabled human being with intellectual capacities inferior to those of a dog or a pig is of equal value to the life of a normal human being? This sounds like speciesism to me, and as I said earlier, I have yet to see a plausible defence of speciesism.”

Now, we do not know that a tool used to shape and govern so much of our lives will be a pure Singerian machine. But the association with Peter Singer should be enough to make us uncomfortable with ceding control of these machines to Silicon Valley. We should be even more uncomfortable with AI leaders like Sam Altman, who seems to have few moral concerns at all, or investors like Marc Andreessen, who perform a hysterical worship of unrestrained AI growth.

Neither the unfettered greed of the Silicon Valley investors nor the admittedly far more well-meaning idealism of the techno-utopians will lead us to a healthy AI future. So what can? AI should be regulated like other dangerous tools, namely, through the democratic process. The specific organ for this regulation should, straightforwardly, be Congress. This proposal might not inspire confidence, given the sclerotic state of the institution, but Congress is the place where we, as a nation, come to our mutual settlements with regard to the laws that protect the common good. The work of effecting this change is the normal work of political life: persuading the public, organizing voters, electing the right officials, and informing and lobbying those officials.

By a wide margin, Americans are more worried than excited about what is happening in AI, according to Pew. This is not how the public responded to radio, television, or the internet; AI (so-called) is different. Rather than leaving regulation up to the denizens of the San Francisco Bay, this concern, this energy, needs to be wisely organized so that Congress can put limits on the AI companies that the companies themselves may not endorse or desire, but that we, through our elected representatives, do.

At the same time, AI regulation should not be the prerogative of the executive or the Department of Defense, unless under the delegation of a statute from Congress. Recent events have shown Anthropic to be more restrained and cautious than DOD on AI deployment in war. This is a developing story, but as of this writing, President Trump has ordered the government to sever ties with Anthropic, because of its refusal to accede to DOD demands. But Congress, not the president, should have power over these types of decisions; it needs to act to set the boundaries for the private sector and for the federal government itself.

Should AI tools be allowed to make life-and-death decisions in our healthcare system? Should they replace human educators in schools? Should they be allowed to replace human therapists or other medical professionals? Should they be allowed to create false footage portraying real persons without the consent of those persons? Should they be used to spy on people? Should they be used to create personalized pornography? What about autonomous weapons? Good answers depend on us being thoughtful, attentive, and engaged, not fearfully paralyzed or sleepily checked out.

At the same time, not everything is a matter of law. Culture, morals, and social stigma should also be brought to bear on harmful or pathological uses of these technologies. Social pressure led X to change its own AI model, Grok, so that it no longer produces sexually abusive imagery of real people. This is one step in a good direction.

AI tools can be used to detect patterns in data that might be hugely beneficial in scientific and medical research. Let’s make sure that these instruments are used for what they are truly good for, and not used to control us, rule us, pilfer our pockets, isolate us from one another, make us stupider, and make our shared life increasingly one of deception and unreality. AI companies won’t save us. We have to save ourselves and one another.