Authored by Jon Fleetwood via JonFleetwood.com,

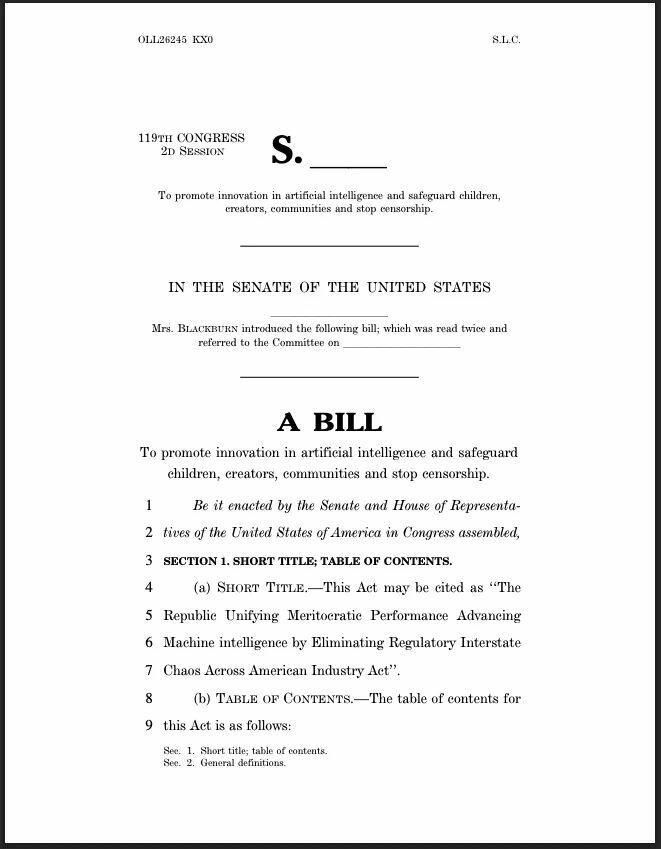

U.S. Senator Marsha Blackburn has released a 291-page legislative framework that would repeal Section 230, expand liability across the artificial intelligence ecosystem, and establish a unified federal rulebook governing how AI systems are built, deployed, and controlled in the United States.

The proposal—titled the TRUMP AMERICA AI Act—is being presented as a pro-innovation, pro-safety measure designed to “protect children, creators, conservatives, and communities” while ensuring U.S. dominance in the global AI race.

But the actual structure of the bill reveals a comprehensive system that centralizes regulatory authority, expands legal exposure for platforms, and creates new mechanisms for controlling AI outputs and digital information flows.

For independent journalists and publishers operating on platforms like Substack, the repeal of Section 230 shifts the risk upstream.

Platforms would no longer be shielded from liability tied to user-generated content, meaning they must evaluate whether hosting certain reporting could expose them to lawsuits.

In practice, that creates pressure to restrict or deprioritize content that could be framed as causing harm—particularly reporting on public health, government programs, or other high-stakes issues—regardless of whether it is sourced or accurate.

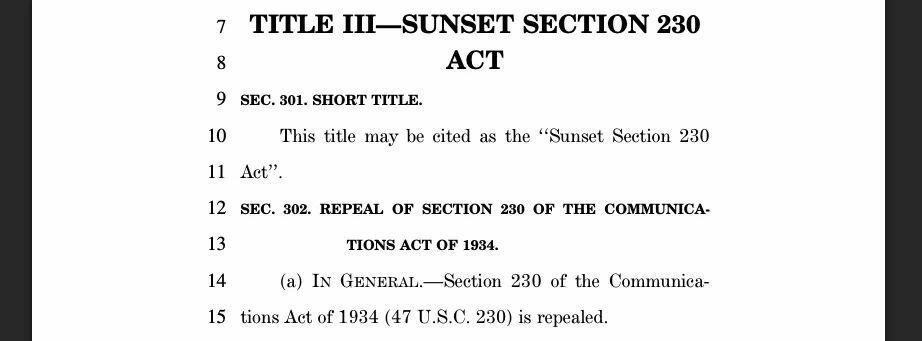

Section 230 Repeal Removes Core Liability Shield

At the center of the bill is the full repeal of Section 230 of the Communications Act—long considered the legal foundation of the modern internet.

Section 230 protects online platforms like Substack from being treated as the publisher or speaker of content their users provide, shielding them from most civil liability over what users post.

The Blackburn framework would eliminate that protection by repealing Section 230 entirely.

In its place, the bill creates multiple new avenues for liability, allowing enforcement not just by federal regulators, but by state attorneys general and private actors.

Platforms and AI developers could face legal action for “defective design,” “failure to warn,” or producing systems deemed “unreasonably dangerous.”

The practical effect is that once liability protections are removed, platforms are no longer free to host content neutrally.

They must actively manage and restrict content—or risk being sued.

‘Duty of Care’ Standard Introduces Subjective Enforcement Trigger

The bill imposes a “duty of care” requirement on AI developers, mandating that they prevent “reasonably foreseeable harms” arising from their systems.

That language is broad and undefined.

What qualifies as “harm,” what is “foreseeable,” and when an AI system is considered a “contributing factor” are not fixed standards.

They are determined after the fact by regulators, courts, and litigants.

This creates a retroactive enforcement model where AI outputs can be judged unlawful based on evolving interpretations, forcing companies to preemptively restrict what their systems are allowed to generate.

Federal ‘One Rulebook’ Replaces State-Level Variation

Blackburn’s framework repeatedly emphasizes the need to eliminate what she calls a “patchwork of state laws” and replace it with a single national standard.

That shift consolidates authority at the federal level, empowering agencies such as the Federal Trade Commission, Department of Justice, National Institute of Standards and Technology (NIST), and Department of Energy to define and enforce AI rules across the country.

Rather than multiple local jurisdictions experimenting with different approaches, the bill establishes a centralized governance model for AI systems.

Algorithmic Systems & Content Delivery Brought Under Regulation

Under the “Protecting Children” provisions, the bill directly targets the design features of digital platforms, including:

- Personalized recommendation systems
- Infinite scrolling and autoplay
- Notifications and engagement incentives

Platforms would be required to modify or restrict these features to prevent harms such as anxiety, depression, and “compulsive usage.”

This is not limited to content moderation.

It regulates how information is ranked, delivered, and amplified—placing core algorithmic systems under federal oversight.

Watermarking & Content Provenance Standards Introduced

The bill directs NIST to develop national standards for:

- Content provenance (tracking the origin of digital content)
- Watermarking of AI-generated media
- Detection of synthetic or modified content

It also requires AI providers to allow content owners to attach provenance data and prohibits the removal of that data.

These provisions create a technical infrastructure for identifying and tracking the origin and authenticity of digital content across platforms.

New Copyright & Likeness Liability for AI Training and Outputs

The framework explicitly states that using copyrighted material to train AI models does not qualify as fair use, opening the door for widespread litigation against AI developers.

It also establishes liability for the unauthorized use of an individual’s voice or likeness in AI-generated content, and extends that liability to platforms that host such material if they are aware it was not authorized.

Together, these provisions expand legal exposure across both the training and deployment phases of AI systems.

Mandatory Workforce Surveillance & AI Risk Monitoring

The bill requires companies to report quarterly data on AI-related job impacts, including layoffs, hiring shifts, and positions eliminated due to automation.

It also establishes a federal “Advanced Artificial Intelligence Evaluation Program” to monitor risks such as:

These measures create ongoing federal visibility into both the economic and operational effects of AI deployment.

National AI Infrastructure & Public-Private Control Systems

The proposal includes the creation of the National Artificial Intelligence Research Resource (NAIRR), a shared infrastructure providing:

- Compute power
- Large datasets
- Research tools

This system would be governed through a public-private structure, combining federal agencies and private sector contributors.

Control over compute, data access, and infrastructure places the direction of AI development within a centralized framework.

Structural Shift: Liability as the Enforcement Mechanism

While the bill is framed as reducing regulatory complexity, its core enforcement mechanism is not deregulation but liability expansion.

By removing Section 230 and introducing broad legal exposure, the framework creates a system where platforms and AI developers must continuously assess legal risk tied to content, outputs, and system behavior.

That shifts enforcement away from direct government censorship and toward a model where companies self-regulate under constant threat of litigation.

Bottom Line

Blackburn’s AI framework restructures the legal conditions under which information is allowed to exist online.

By removing Section 230 and expanding liability across platforms, the bill shifts risk away from the speaker and onto the infrastructure that distributes their work.

That means companies like Substack are no longer simply hosting content—they are legally exposed to it.

In that environment, the question is no longer whether reporting is accurate or sourced, but whether hosting it could trigger legal risk.

The predictable result is preemptive restriction: platforms limiting reach, tightening policies, or removing content that could be framed as harmful—especially reporting on public health, government programs, or other high-stakes issues.

For independent journalists, the pressure point is distribution.

The bill creates a system where controversial or high-impact reporting does not need to be banned outright.

It only needs to become too risky for platforms to carry.

In effect, control over liability becomes control over visibility.